When business conversations turn to uptime guarantees or disaster-proof infrastructure, the term “Tier” almost always surfaces. These tiers, defined by the Uptime Institute, offer a common language for describing how resilient a data center really is.

Rather than arcane engineering jargon, the system boils down to four clear classes that balance reliability, redundancy, and cost. Below is a straightforward tour of Tier I through Tier IV, showing how each level raises the bar for power, cooling, and maintenance without burying you in technical noise.

Tier I – Basic Capacity, Best for Testing the Waters

A Tier I facility is essentially a well-organized server room with dedicated power and cooling, but no redundant components for either. Everything runs on a single, non-redundant path: one utility feed, one cooling loop, one distribution system. That architecture keeps capital expenses down but accepts that scheduled maintenance or a surprise breaker trip will halt operations.

Tier I sites often serve startups, pilot projects, or regional offices where occasional downtime is tolerable and budgets remain tight. Because the layout is simple, staffing costs stay low, yet the trade-off is a commonly cited availability of roughly 99.671 percent per year, which translates to more than 28 hours of expected downtime annually.
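
The downtime figure follows directly from the availability percentage. As a minimal sketch, assuming a 365-day year and a hypothetical helper named `annual_downtime_hours`, the conversion looks like this:

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def annual_downtime_hours(availability_percent: float) -> float:
    """Expected downtime per year implied by an availability percentage."""
    return (1 - availability_percent / 100) * HOURS_PER_YEAR


# 99.671 percent is the Tier I figure quoted in this article.
tier_i_downtime = annual_downtime_hours(99.671)
print(f"Tier I: {tier_i_downtime:.1f} hours of expected downtime per year")
```

The same arithmetic applies to any availability target, so it can be reused to sanity-check the figures quoted for the higher tiers.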

Tier II – Redundant Components, Fewer Nerves

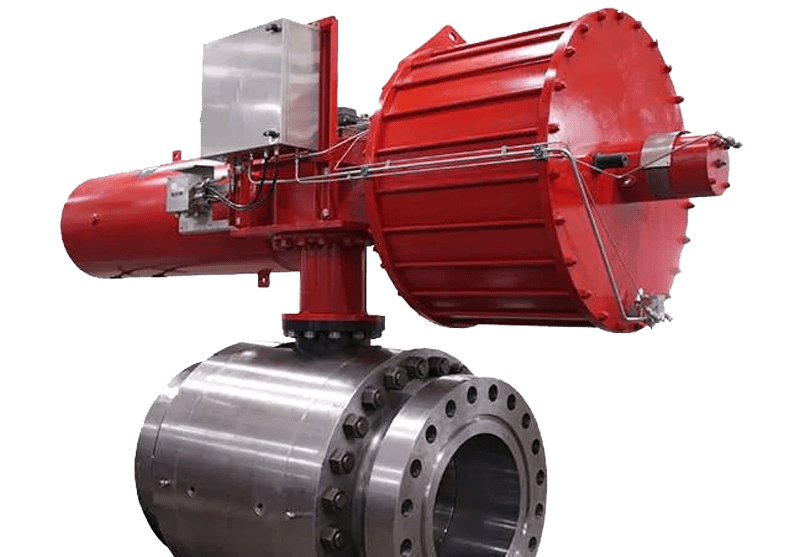

Tier II adds a safety net by doubling up critical components such as UPS modules, chillers, and fuel pumps while still feeding them through a single distribution path. The idea is to survive individual equipment failures without forcing a complete shutdown. For instance, one chiller can be serviced while its twin keeps the server hall cool.

This “N+1” philosophy pushes expected availability to about 99.741 percent, or roughly 23 hours of yearly downtime, and is popular among midsize enterprises that need better reliability but are not yet ready for the cost or complexity of higher tiers. Installation remains fairly straightforward because the power and cooling paths themselves are still singular.
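
The benefit of a spare unit can be illustrated with a back-of-the-envelope calculation. The sketch below assumes each unit fails independently and uses a made-up per-unit availability of 99 percent. Neither assumption comes from the Uptime Institute's definitions, and the function name `k_of_n_availability` is hypothetical.

```python
from math import comb


def k_of_n_availability(unit_availability: float, needed: int, installed: int) -> float:
    """Probability that at least `needed` of `installed` independent units are up."""
    return sum(
        comb(installed, up)
        * unit_availability**up
        * (1 - unit_availability) ** (installed - up)
        for up in range(needed, installed + 1)
    )


UNIT_AVAILABILITY = 0.99  # illustrative assumption, not a measured figure

# N: one chiller is needed and only one is installed.
single_chiller = k_of_n_availability(UNIT_AVAILABILITY, needed=1, installed=1)
# N+1: one chiller is needed, but a twin is installed alongside it.
chiller_pair = k_of_n_availability(UNIT_AVAILABILITY, needed=1, installed=2)

print(f"One chiller (N):    {single_chiller:.4f}")
print(f"Two chillers (N+1): {chiller_pair:.4f}")
```

Under these assumptions the spare cuts the chance of losing cooling a hundredfold, although the single distribution path feeding both units remains a shared point of failure.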

Tier III – Concurrently Maintainable, Business-Ready Resilience

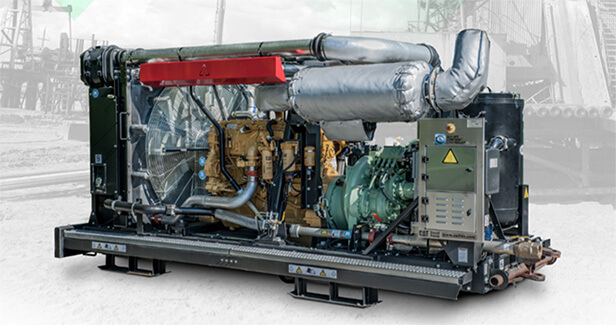

Tier III jumps from component redundancy to full path redundancy, creating two independent routes for power and cooling. Engineers can shut down an entire utility feed or cooling line for maintenance while IT loads stay live on the alternate path—no reboot, no angst.

This commitment to redundant distribution means dual generators, separate switchgear, and segmented conduit runs, all of which lift availability to about 99.982 percent, or roughly 95 minutes of expected downtime annually. Many SaaS vendors and financial firms place production workloads here, balancing operational certainty with reasonably contained costs and energy footprints.

Businesses seeking this level of reliability can benefit from the high-performance environments provided by colocation data centers by Opus Interactive, which offer the scalable power and security necessary for modern enterprise demands.

Tier IV – Fault-Tolerant, No-Excuse Continuity

At Tier IV, the architecture assumes something will break at exactly the worst possible moment, then designs around that certainty. Every electrical and mechanical subsystem is fully redundant, and each path is isolated so that a failure on one side cannot cascade to the other. Moreover, equipment is arranged so that switchover happens automatically, without human intervention. Even physical layout decisions, such as adopting raised floors, support easier cable segregation and airflow management in this uncompromising environment.

The payoff is an impressive 99.995 percent availability, equating to barely 26 minutes of downtime per year. Tier IV suits mission-critical operations like global payment networks or life-and-death healthcare platforms where any outage carries steep legal or reputational risk, and budgets can justify the investment.

Conclusion

Choosing the right tier is less about bragging rights than about matching risk tolerance to cost. A small firm running nightly backups may thrive in Tier I, while an online bank cannot afford anything less than Tier IV’s fault tolerance.
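
One rough way to frame that matching exercise is to start from the downtime a business can tolerate and find the lowest tier that fits. The sketch below uses only the availability figures quoted in this article. The function names and the example budgets are hypothetical, and a real decision would also weigh cost, compliance, and site-specific factors.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years

# Availability figures quoted in this article, in percent, lowest tier first.
TIER_AVAILABILITY = {
    "Tier I": 99.671,
    "Tier II": 99.741,
    "Tier III": 99.982,
    "Tier IV": 99.995,
}


def downtime_hours(availability_percent: float) -> float:
    """Expected downtime per year implied by an availability percentage."""
    return (1 - availability_percent / 100) * HOURS_PER_YEAR


def lowest_tier_within(max_downtime_hours: float):
    """Return the lowest tier whose expected downtime fits the budget, else None."""
    for tier, availability in TIER_AVAILABILITY.items():
        if downtime_hours(availability) <= max_downtime_hours:
            return tier
    return None


for tier, availability in TIER_AVAILABILITY.items():
    print(f"{tier}: {downtime_hours(availability):.2f} hours/year")

# Hypothetical downtime budgets, in hours per year.
print(lowest_tier_within(24))
print(lowest_tier_within(2))
```

A budget tighter than Tier IV's expected downtime returns `None`, which is a reminder that no single facility promises zero downtime.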

Understanding how each classification layers redundancy, maintainability, and physical design makes it easier to argue for (or against) added investment when business growth or regulatory pressure pushes the bar higher. After all, in a world that never sleeps, the silent hum of a well-designed data center keeps every digital promise alive.